Overview of Steppingstones Cognitive Research

This article provides a brief overview of the findings and observations of a cognitive accessibility survey. Funding for this project was provided by the Office of Special Education and Rehabilitative Services through Steppingstones of Technology Innovation Grant #H327A070057.

Introduction

Creating accessible websites for users with learning and cognitive disabilities is an area with little research and few concrete recommendations. While web developers can apply universal principles of web accessibility to benefit all users with sensory or physical disabilities, the application of cognitive accessibility is varied and complex. Because the research is limited and the subject is complex, common techniques to increase usability for people with cognitive and learning disabilities are hard to come by, even though the number of users with these disabilities far exceeds the number of people with physical and sensory disabilities.

The Project

WebAIM, through a Steppingstones of Technology grant (U.S. Department of Education), has undertaken a project to assist web developers in creating websites that are highly accessible to users with cognitive and learning disabilities. The goals of this project are to research recommendations and expert opinion on cognitive web accessibility, test the impact of selected recommendations, implement a set of best practice rules into evaluation tools, and report on our findings. Our aim is to implement and report on real strategies that web developers can use to increase the accessibility of their web pages.

Research Approach

WebAIM conducted a thorough literature review and then filtered expert recommendations to identify key aspects of design that are broadly applicable, of benefit to web developers, and machine testable. The items selected for testing were those judged to have a high effect on the largest number of people with cognitive disabilities, to be very useful for web developers, and to be automatically testable. The resulting list of items was tested with users with cognitive and learning disabilities:

- Font size

- Headings

- Images

- Line length

- Lists

- Multimedia

- Reading level

- Search

- Font type

The subjects of this testing were eight students with cognitive or learning disabilities who were aged 12 to 17 and in grades 6 to 11. Each student reported frequent use of the web.

Each item above was tested by measuring efficiency, accuracy, and satisfaction in completing web-based tasks on pairs of web pages that isolated the test items. For example, one page might have adequate font size while another had a small font size. Other variables (distracters, reading level, complexity, randomness of presentation, etc.) were accounted for; in other words, we tried to make the element we were testing the only difference between the pages.
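
The paired comparison described above can be sketched in a few lines of Python. The sketch counts how many participants completed a task faster on one page variant than on the other. The `PairedTrial` record, its field names, and the sample timings are hypothetical placeholders chosen for illustration; they are not data or tooling from the study.

```python
from dataclasses import dataclass


@dataclass
class PairedTrial:
    """One participant's results on the two page variants (hypothetical record)."""
    participant: str
    seconds_a: float  # time to complete the task on variant A
    seconds_b: float  # time to complete the task on variant B


def count_faster_on_a(trials: list[PairedTrial]) -> int:
    """Return how many participants were faster on variant A than on variant B."""
    return sum(1 for t in trials if t.seconds_a < t.seconds_b)


# Placeholder timings, invented for this example only.
trials = [
    PairedTrial("P1", 30.0, 45.0),
    PairedTrial("P2", 52.0, 40.0),
    PairedTrial("P3", 28.0, 33.0),
]
print(f"{count_faster_on_a(trials)} of {len(trials)} faster on variant A")
```

The same tally could be repeated for accuracy and satisfaction, which is roughly how statements such as "seven of the eight" in the findings below can be read.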

Research Findings

Font size

We tested one page that had a small font size against a page that had a slightly above-average font size. Seven of the eight volunteers were more efficient (correctly found relevant information) with the larger font size than with the smaller font size. Larger font size appeared to have a positive effect on effectiveness (they found the information more easily) and user satisfaction (they indicated that the larger font page seemed easier or was more efficient).

Images

We tested one page that had images corresponding with the text and another without images. Seven of the eight volunteers were more efficient and seven of the eight were more satisfied with the inclusion of images.

Line length

We tested three pages with different line lengths: short (~25 characters), average (~75 characters), and long (~120 characters). The difference in efficiency between an average line length and a short line length was inconclusive. However, the pages with average and short line lengths were both dramatically more efficient than the page with a long line length; all eight students took the longest time interacting with the long line length page. Although the pages were the same length, some students commented that they appeared different, with the longer-lined pages seeming shorter. In other words, while some students perceived the long line length page as being shorter than the others, it actually took them much longer to read it.
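
Because line length is one of the machine-testable items, a check for it is easy to sketch. The Python below classifies already-wrapped text by its mean characters per line, using the approximate lengths of the three test pages. The function name and the cut-off points (midpoints between the tested lengths) are assumptions made for illustration, not thresholds established by the study.

```python
# Approximate line lengths of the three test pages, in characters.
TEST_LENGTHS = {"short": 25, "average": 75, "long": 120}

# Hypothetical cut-offs: midpoints between the tested lengths (an assumption).
SHORT_MAX = (TEST_LENGTHS["short"] + TEST_LENGTHS["average"]) / 2    # 50.0
AVERAGE_MAX = (TEST_LENGTHS["average"] + TEST_LENGTHS["long"]) / 2   # 97.5


def classify_line_length(wrapped_text: str) -> str:
    """Classify rendered text by its mean characters per non-empty line."""
    lines = [line.strip() for line in wrapped_text.splitlines() if line.strip()]
    if not lines:
        raise ValueError("no text to measure")
    mean_length = sum(len(line) for line in lines) / len(lines)
    if mean_length <= SHORT_MAX:
        return "short"
    if mean_length <= AVERAGE_MAX:
        return "average"
    return "long"
```

An evaluation tool might flag only pages classified as "long", since that was the only category that clearly hurt efficiency in our testing.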

Multimedia

We tested one page with video instructions against a page with written instructions. Seven of the eight students were more efficient with the video instructions and also expressed more satisfaction with that page than with the page with written instructions.

Less conclusive elements

In our tests, the following items did not appear to significantly affect student performance. Please keep in mind that we worked with a small number of students. Greater participation might have changed this result.

- Headings - one page with headings against one without. Students were slightly more accurate, efficient, and satisfied with the pages that did not have headings. The succinctness of the page content may have caused the headings to be more of a distraction than a benefit to some students.

- Lists - one page with a list against one without a list. While there was little difference in accuracy or efficiency of completing the task (see observations below for insight into this), students were much more satisfied with the page that contained the list and indicated that it seemed easier.

- Reading level - one page with a higher reading level and one with a lower reading level. While students completed the tasks slightly more efficiently with the higher reading level page, there was no difference in accuracy or indicated satisfaction. Again, the sample of text was not sufficiently large to gain a highly accurate measure of reading speed.

- Search - the ease of finding a web page in a site, once with search and once with click-through navigation. The results of this test varied. Spelling out the search terms proved difficult and time consuming for some participants. Others had difficulty choosing "the best" search result from the list. Some students had difficulty completing the task without search (see observations below).

- Font type - one page with serif type against one with sans-serif type. The difference in reading speed and satisfaction between serif and sans-serif fonts was very small.
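
Reading level, listed above, is another machine-testable item. This summary does not say how reading level was measured, so the sketch below uses the widely known Flesch-Kincaid grade level formula as a stand-in. The function names are hypothetical, and the syllable counter is a rough vowel-group heuristic (an assumption), so scores are approximate.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count groups of consecutive vowels (assumption)."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_kincaid_grade(text: str) -> float:
    """Estimate the U.S. grade level of text with the Flesch-Kincaid formula."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (
        0.39 * len(words) / len(sentences)
        + 11.8 * syllables / len(words)
        - 15.59
    )


simple = "The cat sat. The dog ran."
dense = "Cognitive accessibility recommendations require considerable investigation."
print(flesch_kincaid_grade(simple) < flesch_kincaid_grade(dense))
```

As noted for the reading level item, short text samples give unreliable measurements, and the same caution applies to any automated score.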

Observations

In many ways, the general observations we made while testing the students were as valuable and surprising as the measurable data we collected in each test, if not more so.

Perceived difficulty

The perceived difficulty of a page may have had a direct influence on how difficult the page actually was for the participant. For example, almost all students were more efficient and more satisfied with the pages with larger fonts vs. the ones with smaller fonts. However, students commented that the pages with larger fonts were easier because they contained less text. In reality, both pages had identical word counts. The larger font size made the pages appear to have less text, and students indicated that these pages were easier for this reason, not because they had large fonts. Thus, it is difficult to isolate whether it was the font size that actually made a difference in efficiency of task completion or if it was the student perception of difficulty that made the difference.

Lesson learned: Make your page appear easy to use.

Confirmation for confidence

Participants were given a specific objective for each test case page. This was used to take measurements of accuracy and efficiency, but it also gave us a way to observe how students with cognitive disabilities carried out the problem-solving process. Often a student would find the answer but would very cautiously recheck multiple times that they had indeed found the correct answer before signaling their response.

One example of this occurred when testing the "search" item. Our preliminary research indicated that the presence of a search mechanism should be helpful for our target users. Some students, however, found it overwhelming when they engaged in a search and were presented with numerous search results. They showed difficulty and apprehension in choosing the correct page from those search results. As a result, while utilizing "search" as a design element was easier, the efficiency of completing the task was affected by the efforts of the student to accomplish the task accurately. It wasn't a matter of finding the correct answer; it was a matter of choosing the correct answer. Providing the results in a less overwhelming and more intuitive way, providing mechanisms for the user to easily recover from errors, and making the outcome of the task less vital may have affected the actual efficiency of completing the task.

Lesson learned: Simplicity, error recovery, and intuitiveness can increase efficiency and confidence.

Distractions

Most developers are aware that people with certain cognitive or learning disabilities can be easily distracted. The usual suspects (ads, flashing images, very bright colors, high contrast, etc.) are readily apparent. The more difficult distractions to avoid are those that are also learning aids.

For instance, images can complement text and aid learning. However, such media can be distracting in subtle ways. The image to the right seems innocuous enough, but the text "In Congress..." appears to be nearly cut off at the top. We found that little distractions such as this were a hindrance as well.

Lesson learned: Keep visual aids clean.

Self-paced

In most cases, as was seen in our study, multimedia can be an immense help, especially in cases where instructions are hard to describe. However, the use of multimedia can introduce other issues. Some students appeared to be rushed or unable to keep up with the video instructions in the multimedia test case (tying a knot via text instructions or via multimedia demonstration).

Lesson learned: A text alternative, a prominent pause feature, and an ability to quickly rewind or replay the video allow users to use multimedia to go at their own pace and take in all of the information.

Consistency and organization

Two examples of consistency and organization affecting cognitive accessibility were unexpectedly discovered in our testing.

During one test, students were asked to find a page about hockey. The hockey link, found on the sports page, was visually very different from all other links on the page. It was styled with light text on dark blue instead of black text on a light green background, and it had a very prominent picture of a hockey player.

In the majority of cases, the styling difference may have made the hockey link seem to disappear, perhaps because it wasn't consistent with the other sport links. The special styling on the hockey button appeared to have the opposite effect of what the website authors intended (as far as our users with cognitive disabilities are concerned): instead of drawing attention to the link, it made the link much less noticeable because of the lack of consistency. In some cases, students never found this link despite the fact that it was stylistically the most prominent link on the page.

Lesson learned: Sometimes making something more visually obvious also makes it so different that it can be difficult to find.

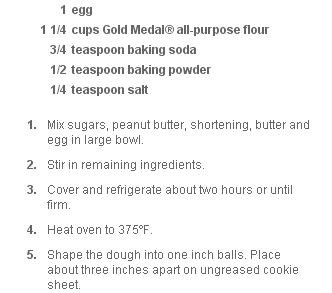

The test pages for the "list" item required students to identify information found within the directions in a recipe. One page provided the instructions in an ordered list and one page provided them in a paragraph. While the ordered list improved the accessibility of the instructions for the majority of subjects, it also caused confusion in others. The numbers before the list of ingredients and the numbered list looked too similar, making it difficult for some to tell where the ingredients stopped and the instructions list began. The organization was not differentiable to some subjects.

Lesson learned: While organizational elements (headings, lists, etc.) can help accessibility, they should be clearly differentiable from other elements.